ClarifAI: Free AI-Powered Code Analysis for Visual Studio Code

A free, privacy-focused VSCode extension that uses local Ollama AI models to explain code and suggest enhancements directly inside your editor. No cloud uploads, no subscriptions, works offline with any programming language.

Project Overview

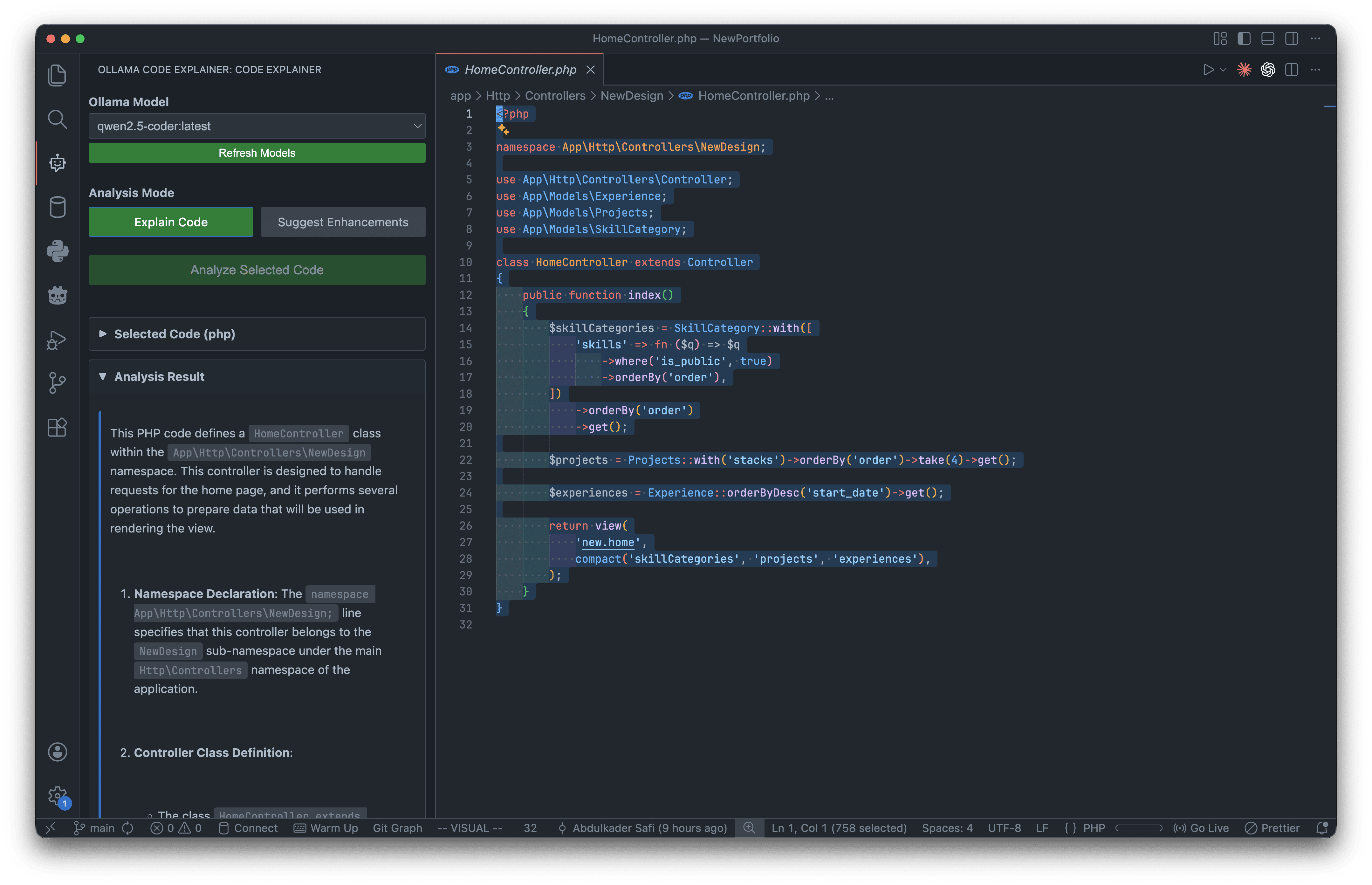

ClarifAI is a Visual Studio Code extension that brings AI-powered code analysis into the IDE without sending a single line of code to the cloud. Built with TypeScript and VSCode's Webview API, it connects to Ollama running locally to provide two core analysis modes: detailed code explanations in plain English and smart enhancement suggestions covering quality, performance, and readability.

The extension features real-time streaming responses, a clean sidebar panel with markdown rendering and syntax highlighting, automatic language detection, collapsible sections for organized output, and support for swapping between any installed Ollama model (deepseek-coder, codellama, mistral, etc.). It works with every programming language VSCode supports and requires zero accounts, API keys, or recurring costs.
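As a rough illustration of how the two analysis modes and the detected language might be combined into a single request, here is a minimal sketch. The instruction strings and the `buildPrompt` helper are hypothetical and are not taken from the extension's source; in a VS Code extension, the language identifier would typically come from the active document's `languageId`.

```typescript
type AnalysisMode = "explain" | "enhance";

// Hypothetical instruction text; the extension's real prompts are not shown here.
const INSTRUCTIONS: Record<AnalysisMode, string> = {
  explain: "Explain what the following code does in plain English.",
  enhance:
    "Suggest improvements to the following code, covering quality, performance, and readability.",
};

// Wraps the selected code in a fenced block tagged with its language so the
// model knows what it is reading.
function buildPrompt(mode: AnalysisMode, languageId: string, code: string): string {
  const fence = "`".repeat(3);
  return [INSTRUCTIONS[mode], "", `${fence}${languageId}`, code.trimEnd(), fence].join("\n");
}
```

Keeping prompt construction in a pure function like this makes it easy to unit test without a running model.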

Tech stack: TypeScript, VSCode Extension API, Ollama API, Webview API, esbuild, ESLint.

The Challenge

Understanding complex codebases is one of the most time-consuming parts of development. Jumping into unfamiliar projects, reviewing pull requests, revisiting your own code from months ago, and tracing logic through undocumented legacy systems all require significant mental effort.

Cloud-based AI coding assistants like GitHub Copilot and Cursor solve parts of this, but they require sending proprietary code to external servers. For developers working with sensitive codebases, client projects, or environments where privacy is non-negotiable, that's a dealbreaker. On top of that, these tools come with monthly subscriptions and require constant internet access, making them inaccessible for offline work or cost-conscious developers.

The Solution

ClarifAI keeps everything local by running inference through Ollama on the developer's own machine. Select any code snippet, choose a model, pick an analysis mode, and the AI streams its response in real time directly into a sidebar panel. The "Explain Code" mode breaks down what the code does with high-level summaries, function-by-function walkthroughs, data flow details, and security considerations. The "Suggest Enhancements" mode returns actionable improvements for input validation, error handling, performance, and readability.
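The streaming flow described above could look something like the sketch below. It assumes Ollama is listening on its default local address (`http://localhost:11434`) and uses the `/api/generate` endpoint, which returns newline-delimited JSON objects when `stream` is true. The helper names `drainNdjson` and `streamCompletion` are illustrative and are not the extension's actual functions.

```typescript
// Fields used from one streamed line of Ollama's /api/generate output.
interface GenerateChunk {
  response: string;
  done: boolean;
}

// Splits buffered text into complete NDJSON lines. Returns the parsed chunks
// plus any trailing partial line to carry over into the next read.
function drainNdjson(buffer: string): { chunks: GenerateChunk[]; rest: string } {
  const lines = buffer.split("\n");
  const rest = lines.pop() ?? "";
  const chunks = lines
    .filter((line) => line.trim().length > 0)
    .map((line) => JSON.parse(line) as GenerateChunk);
  return { chunks, rest };
}

// Hypothetical helper: yields response text fragments as they arrive.
async function* streamCompletion(
  model: string,
  prompt: string,
  baseUrl = "http://localhost:11434", // assumed default Ollama address
): AsyncGenerator<string> {
  const res = await fetch(`${baseUrl}/api/generate`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model, prompt, stream: true }),
  });
  if (!res.ok || !res.body) {
    throw new Error(`Ollama request failed with status ${res.status}`);
  }
  const reader = res.body.getReader();
  const decoder = new TextDecoder();
  let buffer = "";
  while (true) {
    const { value, done } = await reader.read();
    if (done) break;
    buffer += decoder.decode(value, { stream: true });
    const { chunks, rest } = drainNdjson(buffer);
    buffer = rest;
    for (const chunk of chunks) {
      if (chunk.response) yield chunk.response;
      if (chunk.done) return;
    }
  }
}
```

A caller would iterate with `for await (const text of streamCompletion(model, prompt))` and forward each fragment to the sidebar, for example via the webview's `postMessage`. Buffering the partial line matters because a network read can end in the middle of a JSON object.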

The extension dynamically discovers all installed Ollama models, remembers the last-used model between sessions, and renders all output as formatted markdown with syntax-highlighted code blocks. Because responses are streamed, feedback starts appearing immediately instead of only after the full response has been generated. The result is a tool that aims to give developers Copilot-style code understanding for free, fully offline, with their code never leaving their machine.
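Model discovery and the remembered selection might be wired together roughly as follows. This is a sketch under stated assumptions: it queries Ollama's `/api/tags` endpoint, which lists locally installed models, and it stores the last choice in a key-value store shaped like a subset of VS Code's `Memento` (such as `context.globalState`). The `KeyValueStore` interface, the `clarifai.lastModel` key, and the function names are all hypothetical.

```typescript
// Minimal subset of the VS Code Memento shape, declared locally so the sketch
// can run outside the extension host.
interface KeyValueStore {
  get<T>(key: string): T | undefined;
  update(key: string, value: unknown): PromiseLike<void>;
}

const LAST_MODEL_KEY = "clarifai.lastModel"; // hypothetical storage key

// Extracts model names from the JSON body returned by GET /api/tags.
function modelNamesFromTags(body: { models?: { name: string }[] }): string[] {
  return (body.models ?? []).map((m) => m.name);
}

// Prefers the remembered model if it is still installed; otherwise falls back
// to the first installed model, or undefined when none are installed.
function chooseModel(installed: string[], store: KeyValueStore): string | undefined {
  const remembered = store.get<string>(LAST_MODEL_KEY);
  if (remembered && installed.includes(remembered)) return remembered;
  return installed[0];
}

// Asks the local Ollama server which models are installed.
async function listInstalledModels(
  baseUrl = "http://localhost:11434", // assumed default Ollama address
): Promise<string[]> {
  const res = await fetch(`${baseUrl}/api/tags`);
  if (!res.ok) throw new Error(`Could not list models: status ${res.status}`);
  return modelNamesFromTags((await res.json()) as { models?: { name: string }[] });
}
```

Checking the remembered name against the installed list covers the case where a model was removed between sessions.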
